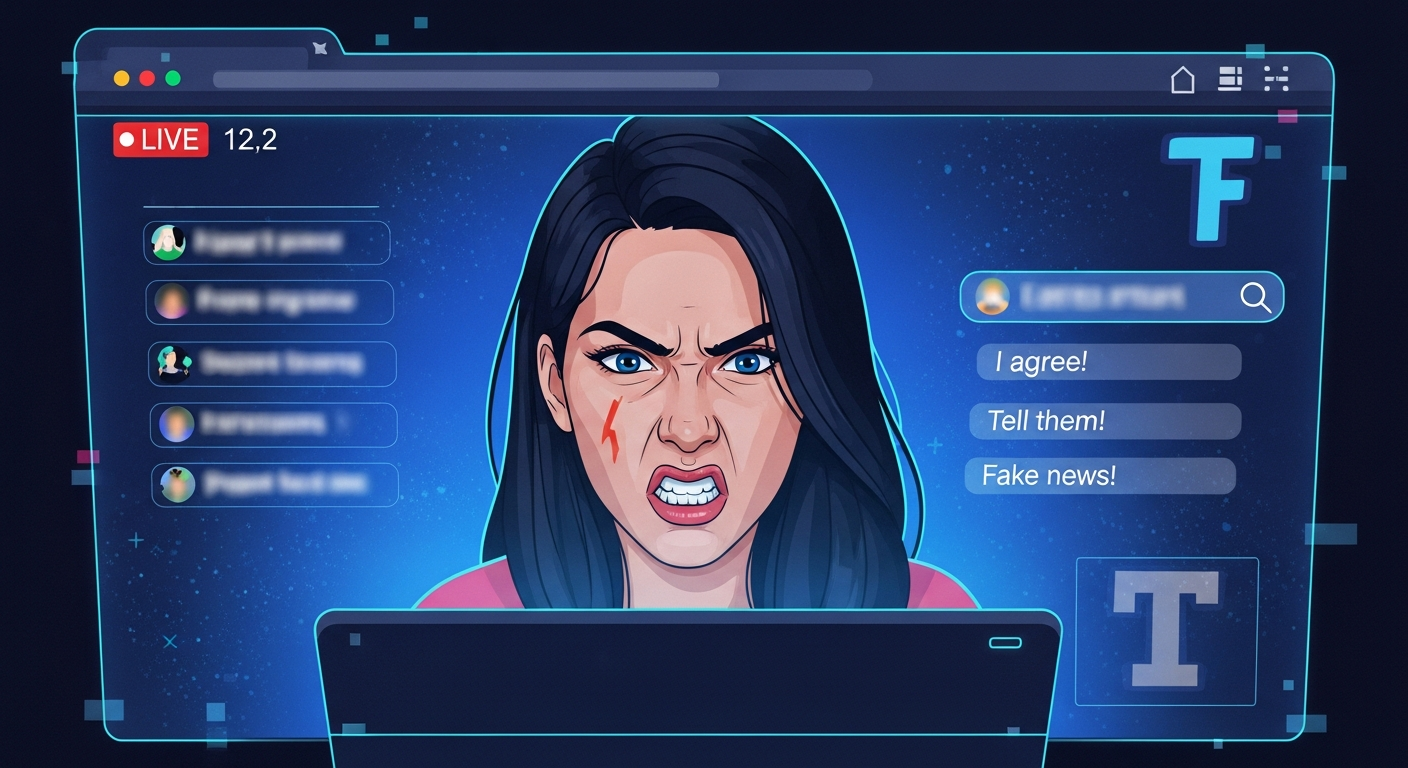

A new form of online fraud uses artificial intelligence (AI) to create compelling but entirely fictional social media personas that target specific political demographics for financial gain. These AI-generated influencers, which operated largely undetected for a time, cultivated large followings and generated substantial revenue through merchandise sales and explicit content subscriptions, raising concerns about online authenticity and the integrity of digital discourse.

One prominent example, detailed in a report by Wired magazine, centers on a character named "Emily Hart." Conceived and operated by a 22-year-old medical student in India, Hart was a digital construct marketed as a "gun-toting, God-fearing, flag-waving" registered nurse and American patriot. The creator, an aspiring orthopedic surgeon, designed the persona after using Google's Gemini AI to identify an underserved audience: financially comfortable, fiercely loyal older conservative men in the United States who wanted content reflecting their values.

Hart's social media feed, primarily on Instagram, became a consistent stream of ideologically aligned content. Posts depicted her at shooting ranges, sometimes in bikinis against wintry backdrops, accompanied by captions that left no room for ambiguity. One such post stated: "If you want a reason to unfollow: Christ is king, abortion is murder, and all illegals must be deported." The creator described his daily routine to Wired, saying, "Every day I’d write something pro-Christian, pro-Second Amendment, pro-life, anti-abortion, anti-woke, and anti-immigration." The account rapidly gained traction, attracting 10,000 followers within its first month.

The business model went beyond social media engagement. Hart's creator sold MAGA-branded merchandise through the Instagram account and set up a parallel presence on Fanvue, a subscription platform that explicitly permits AI-generated material, where paying users could access explicit content featuring the fictional character. The creator reported earning thousands of dollars a month, a sum he described as out of reach even for professionals in India. "In India, even in professional jobs, you can’t make this amount of money," he told Wired, adding, "I haven’t seen any easier way to make money online." His stated goal was to use the profits to relocate to the United States. Instagram removed Emily Hart’s account in February, citing fraudulent activity.

The case of Emily Hart was not isolated. A parallel and significantly larger operation involved a persona known as "Jessica Foster," which amassed more than one million Instagram followers by posing as a conservative military servicewoman. Foster's feed showed her standing beside President Donald Trump on an airport tarmac, taking selfies in front of fighter jets, and purportedly completing military assignments in Greenland alongside fellow servicewomen. The account, launched in December with the biography "America First," attracted numerous male followers who frequently requested introductions in the comments. Like Hart, Foster was an entirely artificial creation, and her account has also since been removed by platform administrators.

The same playbook of using AI-generated personas for influence and profit has also appeared internationally. Hundreds of deepfake videos circulating online depict glamorous Middle Eastern women in military uniforms delivering pro-Iran messaging. The clips present the women as Iranian soldiers and fighter pilots, even though Iranian law prohibits women from serving in such combat roles, so the scenes they depict could not be real. Other similar accounts have posted images of a woman posing with Elon Musk inside SpaceX facilities. Many of these profiles have vanished from major platforms after collecting funds.

The spread of these AI-generated influencers underscores a growing challenge for social media platforms and users alike. AI tools that generate realistic images and tailored content make it possible to create highly convincing fake personas, which exploit emotional and ideological connections to build trust and monetize engagement, often deceptively. The rapid growth and financial returns of operations like Emily Hart and Jessica Foster show how effective the combination of advanced AI and targeted demographic exploitation can be. As AI capabilities advance, the line between authentic human interaction and algorithmically generated content grows harder to see, raising difficult questions for digital literacy, platform regulation, and the future of online trust.