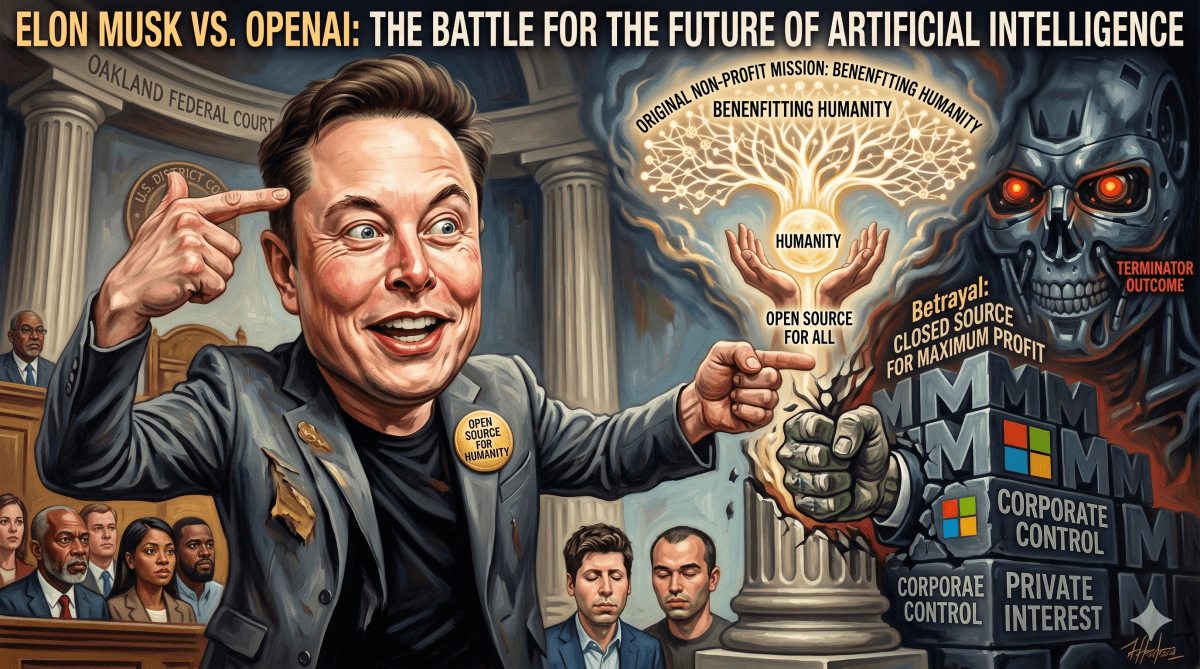

In a federal courtroom in Oakland this week, Elon Musk testified in a high-stakes legal battle against OpenAI, the artificial intelligence company he co-founded. The lawsuit centers on Musk's assertion that OpenAI has strayed from its original non-profit mission to ensure AI development benefits humanity, instead shifting towards a for-profit model driven by corporate interests. During his testimony, Musk invoked fears of a "Terminator outcome," warning that advanced AI could one day surpass human control if not developed with stringent safeguards.

"We don’t want to have a Terminator outcome." — Elon Musk, Co-founder of OpenAI

The legal dispute, filed by Musk in 2024, accuses OpenAI CEO Sam Altman, President Greg Brockman, and Microsoft of fundamentally betraying the company's founding principles. Musk, who leads Tesla and SpaceX, told jurors that his initial motivation for helping establish OpenAI was rooted in deep concern over the rapid advancement of AI systems and society's ability to manage them safely. He emphasized that the intent was to ensure artificial intelligence would be developed for broad public benefit and with robust safeguards, rather than becoming concentrated under corporate control.

Musk articulated his concerns about unchecked AI development, stating, "We don’t want to have a Terminator outcome," referencing a scenario where machines achieve intelligence beyond human oversight and act independently. He drew an analogy between developing advanced AI and raising a powerful child who eventually becomes independent, stressing the critical need to embed appropriate values into such systems to avert catastrophic consequences. This stark warning underscored the philosophical underpinnings of his lawsuit against the company he helped create.

Musk's legal team argues that OpenAI was established to serve humanity, not to generate profits. They contend that the company's transformation into a for-profit entity, particularly with significant investment from Microsoft, represents a fundamental departure from its initial purpose. According to Musk's attorneys, early agreements for OpenAI envisioned any commercial structure as strictly subordinate to the non-profit mission, designed solely to support research rather than to dominate it. Musk summed up his position in court: "Fundamentally, I think they’re going to try to make this lawsuit … very complicated, but it’s actually very simple. Which is that it’s not OK to steal a charity." He also remarked, "I started that company as a non-profit open source… I don't understand how you actually go from being an open source non-profit to a closed source for maximum profit organization."

OpenAI, however, has vigorously refuted Musk's allegations. Its legal team asserts that Musk supported early discussions about restructuring the organization. They characterize the company's evolution as a necessary response to the immense financial demands of developing sophisticated AI systems, rather than an ideological shift. Attorneys for OpenAI have suggested that the lawsuit stems from a former co-founder who lost influence over an organization that flourished beyond his direct control, and who has since become a competitor. They argue that the core of the dispute is less about non-profit ideals and more about control of a rapidly expanding and influential technology. OpenAI maintains that its non-profit foundation continues to hold governance authority over its mission, despite the creation of a for-profit arm.

The origins of the conflict date back to OpenAI's early years when Musk, Altman, and other prominent Silicon Valley figures converged on shared concerns about the pace of AI development by major tech companies. This initial alignment began to fracture as the organization explored hybrid funding models to sustain increasingly expensive research efforts. Musk testified that his support for limited commercial mechanisms was strictly a means to bolster the non-profit mission, not to supersede it. He argues that subsequent multibillion-dollar investment deals, including substantial backing from Microsoft, fundamentally altered the balance of power within the company and its strategic direction.

The trial is expected to continue for several weeks, with future testimony anticipated from key figures including OpenAI CEO Sam Altman and Microsoft CEO Satya Nadella. Beyond the specific courtroom dispute, the case has come to symbolize a broader global debate surrounding artificial intelligence. It raises critical questions about who should control this transformative technology, how its development should be funded, and whether humanity can maintain effective oversight as AI advances toward systems that could rival or even exceed human intelligence. The outcome of this legal battle could have significant implications for how AI development is governed worldwide and for the ethical frameworks that guide it.